My last post was about doing linear regression with 1 variable. This post will show that the same process works for multiple variables, and then explain why we represent the problem with matrices.

The only code in this post is calling the functions introduced in the last one. I also use the same examples, so this post will make a lot more sense if you read that one first.

For reference, here is the linear regression function:

(defn linear-regression [x Y a i]
  (let [m (first (cl/size Y))
        X (add-ones x)]
    (loop [Theta (cl/zeros 1 (second (cl/size X)))
           i i]
      (if (zero? i)
        Theta
        (let [ans (cl/* X (cl/t Theta))
              diffs (cl/- ans Y)
              dx (cl/* (cl/t diffs) X)
              adjust-x (cl/* dx (/ a m))]
          (recur (cl/- Theta adjust-x)
                 (dec i)))))))

Because the regression function works with matrices, it does not need any changes to run a regression over multiple variables.

Some Examples

In the English Premier League, a team gets 3 points for a win, and 1 point for a draw. Trying to find a relationship between wins and points gets close to the answer.

(->> (get-matrices [:win] :pts)
     reg-epl
     (print-results "wins->points"))

** wins->points **
A 1x2 matrix
-------------
1.24e+01 2.82e+00

When we add a second variable, the number of draws, we get close enough to ascribe the difference to rounding error.

(->> (get-matrices [:win :draw] :pts)
     reg-epl
     (print-results "wins+draws->points"))

** wins+draws->points **
A 1x3 matrix
-------------
-2.72e-01 3.01e+00 1.01e+00
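To see how close "close enough" is, here is a small sketch that plugs the printed parameters back into the model. The `predict` helper and the 20-win, 10-draw season are my own illustrations, not part of the code from the last post.

```clojure
;; Parameters copied from the wins+draws output: intercept, wins, draws.
(def theta [-0.272 3.01 1.01])

(defn predict
  "Intercept plus the weighted sum of the feature values (hypothetical helper)."
  [theta features]
  (reduce + (first theta) (map * (rest theta) features)))

;; Hypothetical season: 20 wins and 10 draws, which is exactly 70 real points.
(predict theta [20 10])   ; roughly 70.03
```

The model is off by about three hundredths of a point for that season.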

In the last post, I asserted that scoring goals was the key to success in soccer.

(->> (get-matrices [:for] :pts)
     reg-epl
     (print-results "for->points"))

** for->points **
A 1x2 matrix
-------------
2.73e+00 9.81e-01

If you saw Costa Rica in the World Cup, you know that defense counts for a lot too. Looking at both goals for and against can give a broader picture.

(->> (get-matrices [:for :against] :pts)

reg-epl

(print-results "for-against->pts"))

** for-against->pts **

A 1x3 matrix

-------------

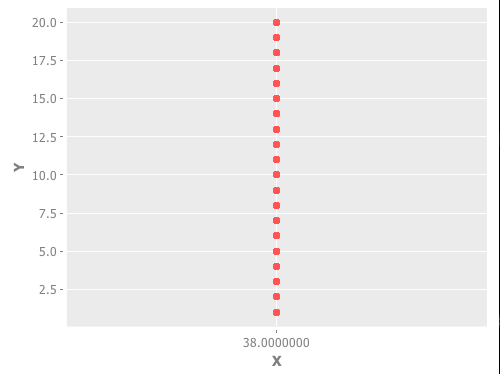

3.83e+01 7.66e-01 -4.97e-01

The league tables contain 20 fields of data, and the code works for any number of variables. Will adding more features (variables) make for a better model?

We can expand the model to include whether the goals were scored at home or away.

(->> (get-matrices [:for-h :for-a :against-h :against-a] :pts)
     reg-epl
     (print-results "forh-fora-againsth-againsta->pts"))

** forh-fora-againsth-againsta->pts **
A 1x5 matrix
-------------
3.81e+01 7.22e-01 8.26e-01 -5.99e-01 -4.17e-01

The statistical relationship we have found suggests that goals scored on the road are worth 0.1 points more than those scored at home. The difference in goals allowed is even greater; they cost 0.6 points at home and only 0.4 on the road.

Wins and draws are worth the same number of points, no matter where the game takes place, so what is going on?

In many sports there is a “home field advantage”, and this is certainly true in soccer. A team that is strong on the road is probably a really strong team, so the relationship we have found may indeed be accurate.

Adding more features indiscriminately can lead to confusion.

(->> (get-matrices [:for :against :played :gd :for-h :for-a] :pts)
     reg-epl
     (map *)
     (print-results "kitchen sink"))

** kitchen sink **
(0.03515239958218979 0.17500425607459014 -0.22696465757628984 1.3357911841232217 0.4019689136508527 0.014497060396707949 0.1605071956778842)

When I printed out this result the first time, the parameter representing the number of games played displayed as a decimal point with no digit before or after. Multiplying each term by 1 got the numbers to appear. Weird.

The :gd stands for “goal difference”: the difference between the number of goals that a team scores and the number it gives up. Because we are also pulling goals for and against, this is a redundant piece of information. Pulling home and away goals for makes the combined goals-for column redundant as well.

All of the teams in the sample played the same number of games, so that variable should not have influenced the model. Looking at the values, our model says that playing a game is worth 1.3 points, and this is more important than all of the other factors combined. Adding that piece of data removed information.
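One way to see why a constant column causes trouble: if every team played 38 games, any weight placed on that column can be traded against the intercept without changing a single prediction. The sketch below uses made-up parameters and a made-up `predict` helper purely to illustrate that trade.

```clojure
(defn predict
  "Intercept plus the weighted sum of the feature values (hypothetical helper)."
  [theta features]
  (reduce + (first theta) (map * (rest theta) features)))

;; Made-up parameters: [intercept, weight on :played, weight on :for].
(def theta-a [38.0 0.0 0.5])   ; the constant part lives in the intercept
(def theta-b [0.0 1.0 0.5])    ; the same 38 points moved onto :played

;; A team that played 38 games and scored 60 goals.
(predict theta-a [38 60])   ; => 68.0
(predict theta-b [38 60])   ; => 68.0
```

Since both parameter sets fit the data equally well, the regression has no reason to prefer one over the other, and the weight on games played ends up being arbitrary.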

Let’s look at one more model with redundant data: goals for, goals against, and the goal difference, which is just the difference of the two.

(->> (get-matrices [:for :against :gd] :pts)
     reg-epl
     (print-results "for-against-gd->pts"))

** for-against-gd->pts **
A 1x4 matrix
-------------
3.83e+01 3.45e-01 -7.57e-02 4.21e-01

points = 38.3 + 0.345 * goals-for - 0.0757 * goals-against + 0.421 * goal-difference

The first term, Theta[0], is right around 38. If a team neither scores nor allows any goals during a season, they will draw all of their matches, earning 38 points. I didn’t notice that the leading term was 38 in all of the cases that included both goals for and against until I wrote this model without the exponents.

Is this model better or worse than the one that looks at goals for and goals against, without goal difference? I can’t decide.
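One way to compare the two is to substitute goal-difference = goals-for - goals-against into the equation and fold its weight into the other two terms. The sketch below does that arithmetic with the printed parameters; the map keys and the `collapse-gd` name are just for illustration.

```clojure
;; Weights copied from the for-against-gd output (intercept left out).
(def with-gd {:goals-for 0.345 :goals-against -0.0757 :gd 0.421})

(defn collapse-gd
  "Rewrite gd as (goals-for - goals-against) and fold its weight into the
   other two: wf*F + wa*A + wgd*(F - A) = (wf + wgd)*F + (wa - wgd)*A."
  [{:keys [goals-for goals-against gd]}]
  {:goals-for     (+ goals-for gd)
   :goals-against (- goals-against gd)})

(collapse-gd with-gd)
;; roughly {:goals-for 0.766, :goals-against -0.4967}
```

Those are the 0.766 and -0.497 from the earlier for/against model, with the same 38.3 intercept. As far as the printed digits show, the two models make the same predictions; the redundant column only changes how the weight is split up.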

Why Matrices?

Each of our training examples has a series of X values and one corresponding Y value. Our dataset contains 380 examples (20 teams * 19 seasons).

Our process is to guess a value for each parameter, multiply the X values by those parameters, and compare the result for each example to its Y value. We use the differences between our guesses and the real-life values to improve the parameters.

This could be done with a loop. With m examples and n features we could do something like

for i = 1 to m
    guess = 0
    for j = 1 to n
        guess = guess + X[i, j] * Theta[j]
    end for j
    difference[i] = guess - Y[i]
end for i

We would need another loop to calculate the new values for Theta.
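For comparison, here is a rough sketch of both loops in plain Clojure, with nested vectors standing in for matrices. The function names are mine, and I am assuming each row of X already has the leading 1 that add-ones supplies. The update mirrors the dx and adjust-x steps in the regression function.

```clojure
(defn differences
  "First loop: guess - actual for every example.
   X is a vector of row vectors, Y and theta are plain vectors."
  [X Y theta]
  (vec (for [i (range (count X))]
         (- (reduce + (for [j (range (count theta))]
                        (* (get-in X [i j]) (theta j))))
            (Y i)))))

(defn update-theta
  "Second loop: move each Theta[j] by a/m times the sum of
   difference[i] * X[i, j] over all examples."
  [X diffs theta a]
  (let [m (count X)]
    (vec (for [j (range (count theta))]
           (- (theta j)
              (* (/ a m)
                 (reduce + (for [i (range m)]
                             (* (diffs i) (get-in X [i j]))))))))))

;; One step on three made-up examples where y = 2x.
(let [X     [[1 1] [1 2] [1 3]]
      Y     [2 4 6]
      theta [0.0 0.0]
      diffs (differences X Y theta)]
  (update-theta X diffs theta 0.1))
```

Every index has to be tracked by hand, which is the bookkeeping the matrix version gets rid of.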

Matrices have operations defined that replace the above loops. When we multiply the X matrix by the Theta vector, for each row of X we multiply each element by the corresponding element in Theta and add the products together. The sum for the first row is the first element of the result, the sum for the second row is the second element, and so on.
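A minimal sketch of that row-by-row multiplication in plain Clojure, assuming X is a vector of row vectors and Theta is a flat vector (the `mat-vec` name is made up for this example):

```clojure
(defn mat-vec
  "Multiply each row of X by theta element-wise and sum the products."
  [X theta]
  (mapv (fn [row] (reduce + (map * row theta))) X))

(mat-vec [[1 2]
          [3 4]]
         [10 1])
;; => [12 34]   (1*10 + 2*1 and 3*10 + 4*1)
```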

Matrix subtraction requires two matrices that are the same size. The result of subtraction is a new matrix that is the same size, where each element is the difference of the corresponding elements in the original matrices.
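As a sketch, element-wise subtraction over nested vectors could look like this. The `size` and `mat-sub` names are hypothetical, and the precondition is my way of expressing the same-size requirement.

```clojure
(defn size
  "Rows and columns of a matrix stored as a vector of row vectors."
  [m]
  [(count m) (count (first m))])

(defn mat-sub
  "Element-wise difference of two matrices of the same size."
  [a b]
  {:pre [(= (size a) (size b))]}
  (mapv (fn [row-a row-b] (mapv - row-a row-b)) a b))

;; Guesses minus actual values, both 3 by 1.
(mat-sub [[12] [13] [14]]
         [[10] [13] [15]])
;; => [[2] [0] [-1]]
```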

Using these two operations, we can replace the loops above with

Guess = X * Theta
Difference = Guess - Y

Clearly the notation is shorter. The other advantage is that there are matrix libraries that are able to do these operations much more efficiently than can be done with loops.

There are two more operations that are needed in the linear regression calculations. One is multiplying a matrix by a single number, called a scalar. When multiplying a matrix by a number, multiply each element by that number: [1 2 3] * 3 = [3 6 9].
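In plain Clojure, scalar multiplication is a nested map. This `scale` function is an illustrative stand-in; in the regression function the same job is done by (cl/* dx (/ a m)).

```clojure
(defn scale
  "Multiply every element of matrix m by the number k."
  [m k]
  (mapv (fn [row] (mapv #(* k %) row)) m))

(scale [[1 2 3]] 3)
;; => [[3 6 9]]
```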

The other operation we perform is called a transpose. Transposing a matrix turns all of its rows into columns, and its columns into rows. In our examples, the size of X is m by n, and the size of Theta is 1 by n. We don’t have any way to multiply an m by n matrix and a 1 by n matrix, but we can multiply an m by n matrix and an n by 1 matrix. The product will be an m by 1 matrix.
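Here is a small sketch of how the transpose makes the sizes line up, again with nested vectors instead of Clatrix matrices. The helper names are made up, and `mat-mul` is a naive multiplication meant only to show the shapes.

```clojure
(defn transpose
  "Turn rows into columns and columns into rows."
  [m]
  (apply mapv vector m))

(defn size [m] [(count m) (count (first m))])

(defn mat-mul
  "Naive matrix product: each row of a against each column of b."
  [a b]
  (let [b-cols (transpose b)]
    (mapv (fn [row]
            (mapv (fn [col] (reduce + (map * row col))) b-cols))
          a)))

(def X     [[1 2] [1 3] [1 4]])   ; 3 by 2
(def Theta [[10 1]])              ; 1 by 2

(transpose Theta)                 ; => [[10] [1]], now 2 by 1
(mat-mul X (transpose Theta))     ; => [[12] [13] [14]], 3 by 1
```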

In the regression function there are a couple of transposes to make the dimensions line up. That is the meaning of the cl/t expression. cl is an alias for the Clatrix matrix library.

Even though we replaced a couple of calculations that could have been done in loops with matrix calculations, we are still performing these calculations in a series of iterations. There is a technique for calculating linear regression without the iterative process, called the Normal Equation.

I am not going to discuss the normal equation for two reasons. First, I don’t understand the mathematics. Second, the process we use, Gradient Descent, can be used with other types of machine learning techniques, and the normal equation cannot.